Master evaluation metrics for AI to optimize performance

Choosing the right evaluation metrics for your AI system can feel like navigating a maze blindfolded. You might trust a benchmark score that looks impressive, only to discover your model fails in production. Or you rely on accuracy alone, missing critical nuances like bias or real-world applicability. Understanding evaluation metrics goes beyond chasing high numbers. It requires recognizing what each metric reveals, where it falls short, and how to balance quantitative scores with qualitative judgment. This guide walks you through fundamental classification and regression metrics, explores advanced benchmarks for LLMs and vision AI, exposes common pitfalls like surface bias and contamination, and delivers practical steps to refine your evaluation strategy for genuine performance optimization.

Table of Contents

- Key takeaways

- Fundamental evaluation metrics for AI models

- Benchmarks for advanced AI systems and challenges

- Common pitfalls and nuances in AI evaluation metrics

- Practical guidance for AI engineers choosing and applying evaluation metrics

- FAQ

Key Takeaways

| Point | Details |
|---|---|
| Fundamental metrics | Core metrics like accuracy, precision, recall, F1, MAE, MSE, RMSE, and R² quantify how closely predictions match ground truth. |
| Benchmark saturation | Benchmarks for large language and vision models tend to saturate at high scores, making small differences statistically noisy. |
| Bias and contamination | Evaluation must address bias and contamination, and be refined over time, to avoid overestimating real-world performance. |
| Quantitative and qualitative balance | Balance quantitative scores with qualitative judgment and error visualization to guide deployment decisions. |

Fundamental evaluation metrics for AI models

When you build or deploy an AI model, you need a reliable way to measure how well it performs. Common evaluation metrics for traditional machine learning tasks include accuracy, precision, recall, and F1-score for classification problems, plus mean absolute error (MAE), mean squared error (MSE), root mean squared error (RMSE), and R² for regression tasks. These metrics form the bedrock of evaluating model performance because they quantify how closely predictions match ground truth.

For classification, accuracy measures the proportion of correct predictions across all classes: Accuracy = (TP + TN) / Total, where TP, TN, FP, and FN are the counts of true positives, true negatives, false positives, and false negatives. Precision focuses on positive predictions: Precision = TP / (TP + FP), telling you how many flagged items are truly positive. Recall captures sensitivity: Recall = TP / (TP + FN), revealing how many actual positives you caught. F1-score is the harmonic mean of precision and recall: F1 = 2 × (Precision × Recall) / (Precision + Recall). It stays low unless both are high, which makes it more informative than accuracy when class distributions are skewed.
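The four formulas above can be sketched directly from confusion-matrix counts. This is a minimal illustration, not a library replacement: the function name `classification_metrics`, the example counts, and the convention of returning 0.0 when a denominator is zero are all assumptions made for the example.

```python
def classification_metrics(tp: int, fp: int, tn: int, fn: int) -> dict[str, float]:
    """Compute accuracy, precision, recall, and F1 from confusion-matrix counts.

    Assumption: an undefined ratio (zero denominator) is reported as 0.0.
    """
    total = tp + fp + tn + fn
    accuracy = (tp + tn) / total if total else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (
        2 * precision * recall / (precision + recall)
        if (precision + recall)
        else 0.0
    )
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


# Hypothetical counts for a rare positive class (60 positives in 1,000 items).
metrics = classification_metrics(tp=40, fp=10, tn=930, fn=20)
for name, value in metrics.items():
    print(f"{name}: {value:.2f}")
# accuracy: 0.97, precision: 0.80, recall: 0.67, f1: 0.73
```

With these made-up counts, accuracy looks excellent while a third of the actual positives are missed, which is the gap precision, recall, and F1 are meant to expose.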

For regression, MAE averages absolute differences between predicted and actual values: MAE = mean(|y_true - y_pred|). MSE squares those differences: MSE = mean((y_true - y_pred)²), penalizing larger errors more heavily. RMSE takes the square root of MSE: RMSE = sqrt(MSE), expressing the error in the same units as the target. R² indicates the proportion of variance explained by the model: R² = 1 - (SS_res / SS_tot), where SS_res is the sum of squared residuals and SS_tot is the sum of squared deviations of y_true from its mean. Values closer to 1 suggest a better fit, and a negative value means the model does worse than always predicting the mean.
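To make the regression formulas concrete, here is a small pure-Python sketch. The function name `regression_metrics` and the toy values are assumptions for illustration; the second prediction set adds one large miss to show how differently MAE and RMSE react.

```python
import math


def regression_metrics(y_true: list[float], y_pred: list[float]) -> dict[str, float]:
    """Compute MAE, MSE, RMSE, and R² for paired true/predicted values."""
    if not y_true or len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must be non-empty and the same length")
    n = len(y_true)
    residuals = [t - p for t, p in zip(y_true, y_pred)]
    mae = sum(abs(r) for r in residuals) / n
    ss_res = sum(r * r for r in residuals)
    mse = ss_res / n
    mean_true = sum(y_true) / n
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    # R² is undefined when the targets have no variance.
    r2 = 1 - ss_res / ss_tot if ss_tot else float("nan")
    return {"mae": mae, "mse": mse, "rmse": math.sqrt(mse), "r2": r2}


# Toy data (illustrative only).
y_true = [10.0, 12.0, 14.0, 16.0]
steady = regression_metrics(y_true, [11.0, 11.0, 15.0, 15.0])
one_big_miss = regression_metrics(y_true, [11.0, 11.0, 15.0, 26.0])

for label, m in [("steady", steady), ("one big miss", one_big_miss)]:
    print(f"{label}: MAE={m['mae']:.2f} RMSE={m['rmse']:.2f} R2={m['r2']:.2f}")
# steady: MAE=1.00 RMSE=1.00 R2=0.80
# one big miss: MAE=3.25 RMSE=5.07 R2=-4.15
```

A single outlier roughly triples MAE but multiplies RMSE by five and pushes R² below zero, which is why the choice between them depends on how much a large error should count.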

| Metric | Formula | Use case | Strengths | Limitations |
|---|---|---|---|---|
| Accuracy | (TP+TN)/Total | Balanced classes | Simple, intuitive | Misleading with imbalance |
| Precision | TP/(TP+FP) | Minimize false positives | Focuses on positive quality | Ignores false negatives |
| Recall | TP/(TP+FN) | Minimize false negatives | Captures sensitivity | Ignores false positives |
| F1-score | 2×(P×R)/(P+R) | Imbalanced data | Balances precision and recall | Less interpretable than components |
| MAE | mean(abs(y_true - y_pred)) | Typical error size when outliers should not dominate | Easy to understand, robust to outliers | Doesn’t penalize large errors heavily |
| MSE | mean((y_true - y_pred)²) | Penalize large errors | Differentiable, smooth | Sensitive to outliers; reported in squared units |
| RMSE | sqrt(MSE) | Error in original units | Interpretable scale | Still sensitive to outliers |
| R² | 1 - SS_res/SS_tot | Explained variance | Scale-independent | Can be negative; harder to interpret for non-linear models |

Key considerations when choosing metrics:

- Match the metric to your problem context: use precision when false positives are costly, recall when false negatives matter more.

- For imbalanced datasets, accuracy alone is deceptive; prefer F1-score or area under the ROC curve, and also check the precision-recall curve when positives are very rare.

- In regression, choose MAE for robustness to outliers or RMSE when large errors are particularly harmful.

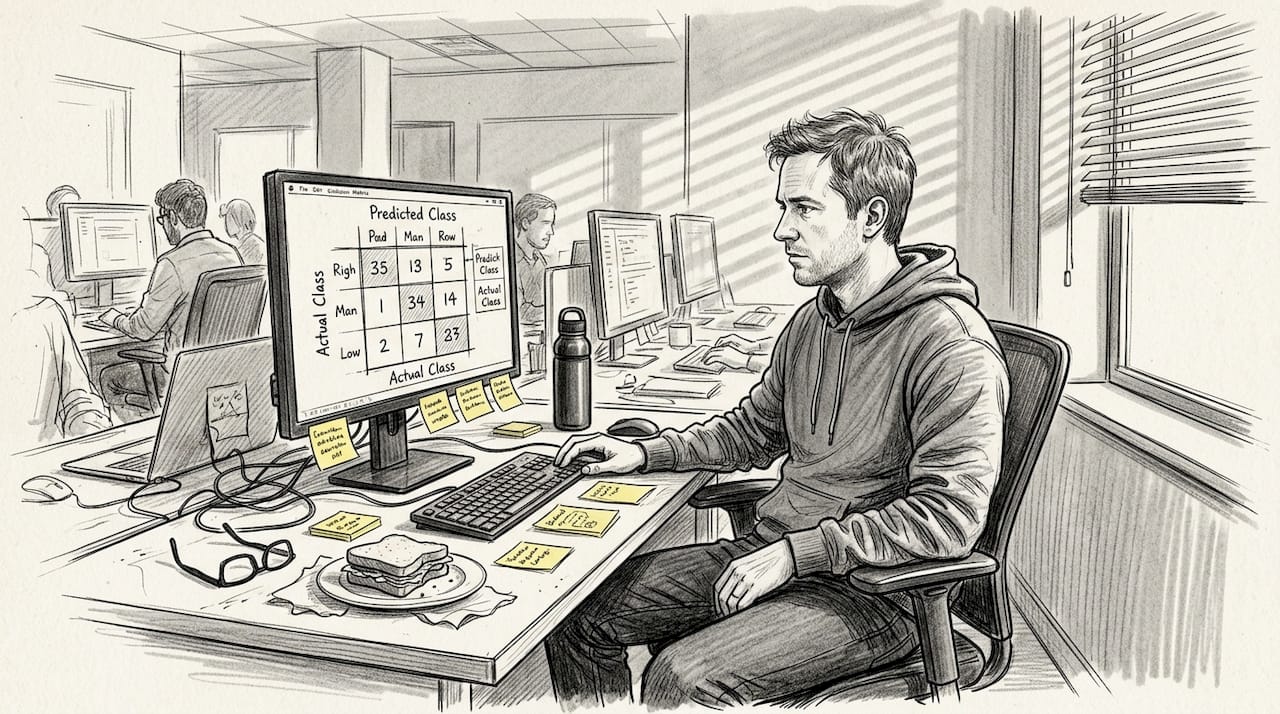

- Always inspect confusion matrices or residual plots alongside summary metrics to catch hidden issues.

Pro Tip: No single metric tells the whole story. Combine multiple metrics and visualize errors to understand trade-offs. For example, a high accuracy model might still have poor recall on rare but critical classes, so inspect per-class performance before deploying.
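The tip above is easy to reproduce with a toy example. In this sketch the labels, the 95/5 class split, and the helper name `per_class_recall` are assumptions for illustration; the "model" simply predicts the majority class every time.

```python
from collections import Counter


def per_class_recall(y_true: list[str], y_pred: list[str]) -> dict[str, float]:
    """Recall for each class: correct predictions divided by true occurrences."""
    support = Counter(y_true)
    hits = Counter(t for t, p in zip(y_true, y_pred) if t == p)
    return {label: hits[label] / support[label] for label in support}


# Hypothetical imbalanced dataset: 95 routine cases, 5 critical ones.
y_true = ["ok"] * 95 + ["fraud"] * 5
y_pred = ["ok"] * 100  # a degenerate model that always predicts the majority class

accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
print(f"accuracy: {accuracy:.2f}")          # accuracy: 0.95
print(per_class_recall(y_true, y_pred))     # {'ok': 1.0, 'fraud': 0.0}
```

The headline accuracy is 95%, yet the model never catches the class that matters. A per-class breakdown like this is a cheap check to run before trusting any aggregate score.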

Benchmarks for advanced AI systems and challenges

As AI systems grow more sophisticated, especially large language models and vision models, standardized benchmarks become essential for comparing capabilities. Popular LLM benchmarks include MMLU (Massive Multitask Language Understanding), which tests knowledge across 57 subjects; HumanEval, which measures code generation accuracy; and GSM8K, which evaluates grade-school math problem solving. For vision AI, ImageNet remains a cornerstone for image classification, while VQAv2 assesses visual question answering.

These benchmarks offer a common yardstick, letting you track progress and compare models objectively. Yet they face a critical challenge: saturation. When top models score 90% or higher, the benchmark loses its power to differentiate cutting-edge systems. Small score differences become statistically noisy, and incremental improvements no longer reflect meaningful capability gains. This saturation effect makes it harder to identify which model truly excels in real-world deployment.
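One rough way to see why small differences near the top are noisy is to put an interval around each score. The sketch below uses the normal-approximation (Wald) interval for a proportion and assumes benchmark items are independent. The function names, the scores, and the item counts are hypothetical, and overlapping intervals are only a conservative heuristic, not a formal significance test.

```python
import math


def score_interval(accuracy: float, n_items: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% confidence interval for an accuracy measured on n_items.

    Uses the Wald (normal-approximation) interval, which is crude near 0 or 1.
    """
    if n_items <= 0 or not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must be in [0, 1] and n_items positive")
    half_width = z * math.sqrt(accuracy * (1 - accuracy) / n_items)
    return max(0.0, accuracy - half_width), min(1.0, accuracy + half_width)


def intervals_overlap(a: tuple[float, float], b: tuple[float, float]) -> bool:
    """True when two closed intervals share at least one point."""
    return a[0] <= b[1] and b[0] <= a[1]


# Hypothetical comparison: 90% vs 92% on a 500-item benchmark.
small = intervals_overlap(score_interval(0.90, 500), score_interval(0.92, 500))
# The same two scores on a hypothetical 50,000-item benchmark.
large = intervals_overlap(score_interval(0.90, 50_000), score_interval(0.92, 50_000))
print(small, large)  # True False
```

On the smaller test set the two-point gap sits inside the sampling error, so ranking the models on it alone would be unreliable. The same gap only becomes distinguishable with far more items.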

| Benchmark | Task focus | Typical top scores | Limitations |
|---|---|---|---|
| MMLU | Multitask language understanding (57 subjects) | 85-90%+ | Saturation reduces differentiation; knowledge recall doesn’t equal reasoning |
| HumanEval | Code generation correctness | 80-90%+ | Limited problem diversity; pass@k with large k can overstate single-attempt reliability |
| GSM8K | Grade-school math reasoning | 85-95%+ | Narrow domain; limited to elementary arithmetic word problems; doesn’t test ambiguity |
| ImageNet | Image classification (1000 classes) | 88-92%+ | Saturated; biased toward certain object types; doesn’t measure robustness |
| VQAv2 | Visual question answering | 75-80%+ | Answer distribution bias; doesn’t capture nuanced visual reasoning |
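The pass@k caveat in the HumanEval row is easier to judge with numbers. The sketch below assumes the combinatorial estimator commonly reported alongside HumanEval, pass@k = 1 - C(n - c, k) / C(n, k), where n samples are drawn per problem and c of them pass the tests. The function name and the sample counts are illustrative.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Estimate the probability that at least one of k samples passes.

    n: samples generated for the problem, c: samples that passed, k: budget.
    """
    if not (0 <= c <= n and 1 <= k <= n):
        raise ValueError("require 0 <= c <= n and 1 <= k <= n")
    # comb(n - c, k) is 0 when fewer than k samples failed, giving pass@k = 1.
    return 1.0 - comb(n - c, k) / comb(n, k)


# Hypothetical problem: 20 samples generated, only 2 pass the unit tests.
print(f"pass@1  = {pass_at_k(20, 2, 1):.3f}")   # pass@1  = 0.100
print(f"pass@10 = {pass_at_k(20, 2, 10):.3f}")  # pass@10 = 0.763
```

The same model looks like a 10% solver or a 76% solver depending on k, so a pass@k figure is only meaningful next to the k it was computed with and the way your product will actually sample.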

Beyond saturation, benchmarks suffer from several limitations:

- Benchmark contamination: training data may inadvertently include test examples, inflating scores artificially.

- Construct validity concerns: high scores on a benchmark don’t guarantee the model possesses the underlying skill the benchmark claims to measure.

- Narrow task scope: benchmarks often test isolated capabilities, missing the integration and adaptability required in production.

- Cultural and linguistic bias: many benchmarks reflect Western, English-centric data, limiting generalization to diverse populations.

Pro Tip: Treat benchmark scores as starting points, not final verdicts. Supplement them with domain-specific evaluations, adversarial testing, and real user feedback. If a model scores 95% on GSM8K but fails on your custom business logic problems, the benchmark score is misleading for your purposes. Build your own evaluation framework tailored to your use case.

Common pitfalls and nuances in AI evaluation metrics

Even well-designed metrics can mislead you if you ignore subtle but critical issues. One major pitfall is surface bias, particularly in code evaluation. Metrics like CodeBLEU often correlate with code form rather than functional correctness. A model might generate syntactically clean code that scores high but fails to solve the problem, or produce messy code that works perfectly yet scores low. This mismatch between metric and true quality undermines trust in automated evaluation.
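The mismatch described above can be demonstrated with a deliberately crude stand-in. The sketch below does not implement CodeBLEU; it uses a plain token-set (Jaccard) overlap as an assumed proxy for any form-based metric, and compares it with actually running test cases. All names and snippets are hypothetical.

```python
import re


def token_overlap(candidate: str, reference: str) -> float:
    """Jaccard overlap of token sets: a crude stand-in for form-based metrics."""
    pattern = r"[A-Za-z_]\w*|\d+|[^\s\w]"
    a = set(re.findall(pattern, candidate))
    b = set(re.findall(pattern, reference))
    return len(a & b) / len(a | b) if (a | b) else 1.0


def passes_tests(source: str, func_name: str, cases: list[tuple[tuple, object]]) -> bool:
    """Execute the source and check every (args, expected) case.

    exec is used only for this illustration; sandbox untrusted model output.
    """
    namespace: dict = {}
    exec(source, namespace)
    func = namespace[func_name]
    return all(func(*args) == expected for args, expected in cases)


REFERENCE = "def add(a, b):\n    return a + b\n"
LOOKALIKE = "def add(a, b):\n    return a - b\n"  # nearly identical text, wrong result
REWRITE = "def add(x, y):\n    total = sum([x, y])\n    return total\n"  # different text, correct
CASES = [((1, 2), 3), ((5, 5), 10)]

for name, code in [("lookalike", LOOKALIKE), ("rewrite", REWRITE)]:
    print(name, round(token_overlap(code, REFERENCE), 2), passes_tests(code, "add", CASES))
# lookalike 0.82 False
# rewrite 0.41 True
```

The overlap score ranks the broken snippet well above the working one, while the functional check gets it right. Execution-based tests are the more direct signal whenever correctness is the goal.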

Benchmark contamination is another pervasive problem. If your training data overlaps with test sets, scores inflate artificially, giving a false sense of capability. Contamination can occur through web scraping, data leakage during preprocessing, or inadvertent inclusion of benchmark examples in large-scale corpora. Detecting contamination requires vigilant auditing and diverse, held-out test sets.

Fairness and cultural bias add another layer of complexity. Vision-language models trained predominantly on Western imagery and English text may perform poorly on non-Western cultural contexts or underrepresented languages. Evaluation metrics that don’t account for demographic diversity can mask these disparities, leading to systems that work well for some users but fail others.
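A first step toward surfacing such disparities is to disaggregate a metric by group instead of reporting one number. The sketch below is a minimal illustration: the group tags ("en", "sw"), the record layout, and the helper names are assumptions, and a real fairness audit would look at more than accuracy gaps.

```python
from collections import defaultdict


def accuracy_by_group(records: list[tuple[str, int, int]]) -> dict[str, float]:
    """Accuracy per group for records shaped as (group, y_true, y_pred)."""
    correct: dict[str, int] = defaultdict(int)
    total: dict[str, int] = defaultdict(int)
    for group, y_true, y_pred in records:
        total[group] += 1
        correct[group] += int(y_true == y_pred)
    return {group: correct[group] / total[group] for group in total}


def largest_gap(scores: dict[str, float]) -> float:
    """Difference between the best- and worst-served group."""
    return max(scores.values()) - min(scores.values())


# Hypothetical evaluation set tagged by input language.
records = (
    [("en", 1, 1)] * 9 + [("en", 1, 0)] * 1
    + [("sw", 1, 1)] * 6 + [("sw", 1, 0)] * 4
)
overall = sum(t == p for _, t, p in records) / len(records)
by_group = accuracy_by_group(records)
print(f"overall={overall:.2f} by_group={by_group} gap={largest_gap(by_group):.2f}")
# overall=0.75 by_group={'en': 0.9, 'sw': 0.6} gap=0.30
```

The overall 75% hides a 30-point gap between the two groups. Tracking the gap alongside the aggregate keeps that disparity visible as models change.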

You must also distinguish accuracy from honesty. Accuracy measures how often predictions match ground truth, but honesty reflects whether the model’s output aligns with its internal belief or confidence. A model might give correct answers by chance or memorization without genuine understanding, or it might hedge and refuse to answer when uncertain, lowering accuracy but increasing trustworthiness. Conflating these concepts leads to poor evaluation of model reliability.
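The trade-off between answering everything and abstaining when unsure can be measured, at least roughly. The sketch below assumes the model exposes a confidence score per answer and treats anything below a threshold as an abstention. It is a proxy for the behavior described above, not a measure of a model's internal beliefs, and the data and names are hypothetical.

```python
def selective_report(
    y_true: list[int], y_pred: list[int], confidence: list[float], threshold: float
) -> dict[str, float]:
    """Summarize accuracy when low-confidence answers count as abstentions."""
    answered = [
        (t, p) for t, p, c in zip(y_true, y_pred, confidence) if c >= threshold
    ]
    correct = sum(t == p for t, p in answered)
    n = len(y_true)
    return {
        "coverage": len(answered) / n,   # share of questions answered
        "overall_accuracy": correct / n,  # abstentions count as misses
        "accuracy_when_answering": correct / len(answered) if answered else float("nan"),
    }


# Hypothetical predictions with self-reported confidence.
y_true = [1, 0, 1, 1, 0, 1, 0, 0]
y_pred = [1, 0, 1, 0, 0, 1, 1, 0]
confidence = [0.95, 0.90, 0.85, 0.55, 0.80, 0.90, 0.50, 0.60]

print(selective_report(y_true, y_pred, confidence, threshold=0.0))
print(selective_report(y_true, y_pred, confidence, threshold=0.7))
```

With no threshold the model answers everything and is right 75% of the time. With a 0.7 threshold it answers fewer questions and its overall accuracy drops to 62.5%, but every answer it does give is correct. Which profile is preferable depends on the cost of a wrong answer compared with no answer.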

Common pitfalls to watch for:

- Metric misinterpretation: relying on a single metric without understanding its assumptions or limitations.

- Ignoring real-world outcomes: optimizing for benchmark scores while neglecting user satisfaction, safety, or ethical considerations.

- Surface bias: metrics rewarding superficial qualities like style or format over functional correctness or semantic accuracy.

- Benchmark contamination: inflated scores due to training-test overlap, reducing the validity of comparisons.

- Fairness blindness: failing to evaluate performance across diverse demographics, languages, or cultural contexts.

“Evaluation must prioritize real-world applicability and iterative refinement. Metrics should evolve alongside models, incorporating human feedback and detecting contamination early to maintain integrity and trust.”

To avoid these pitfalls, adopt a mindset of skepticism and continuous improvement. Question high scores, probe edge cases, and validate metrics against real-world AI applications. Understand that metrics are proxies, not perfect mirrors of capability. When you catch a discrepancy between metric performance and actual utility, investigate the root cause and refine your evaluation strategy.

Practical guidance for AI engineers choosing and applying evaluation metrics

Selecting and applying the right evaluation metrics requires a structured, iterative approach. Here’s a step-by-step framework to guide you:

- Define real-world goals: start by clarifying what success looks like in production. Is it user satisfaction, task completion rate, error reduction, or fairness across demographics? Align your metrics with these goals rather than chasing generic benchmark scores.

- Understand metric limitations: every metric has blind spots. Accuracy ignores class imbalance, F1-score can obscure precision-recall trade-offs, and RMSE is sensitive to outliers. Study the assumptions and failure modes of each metric you use.

- Detect contamination: audit your training and evaluation datasets for overlap. Use techniques like n-gram matching, embedding similarity checks, or manual inspection to identify leakage. Maintain separate, unseen test sets refreshed periodically.

- Iterate with human feedback: quantitative metrics alone miss nuances like fluency, relevance, or user intent. Incorporate human-in-the-loop evaluation through A/B testing, user surveys, or expert review panels. Use this feedback to refine metrics or create custom rubrics.

- Use generalizable rubrics: develop evaluation frameworks that apply across tasks and domains. For example, a rubric assessing explanation quality might include criteria like clarity, completeness, and relevance, scored consistently by human raters.
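The n-gram matching mentioned in the contamination step can be sketched in a few lines. This is a simplified illustration that assumes whitespace tokenization and exact matches. The function names, the example texts, and the choice of n are assumptions, and a production audit would also need normalization, near-duplicate detection, and an index that scales to a real corpus.

```python
def ngrams(text: str, n: int) -> set[tuple[str, ...]]:
    """Set of lowercase word n-grams in the text."""
    tokens = text.lower().split()
    return {tuple(tokens[i : i + n]) for i in range(len(tokens) - n + 1)}


def contamination_rate(test_item: str, training_docs: list[str], n: int = 8) -> float:
    """Fraction of the test item's n-grams that also appear in training documents."""
    test_grams = ngrams(test_item, n)
    if not test_grams:
        return 0.0  # item shorter than n tokens: nothing to compare
    train_grams: set[tuple[str, ...]] = set()
    for doc in training_docs:
        train_grams |= ngrams(doc, n)
    return len(test_grams & train_grams) / len(test_grams)


# Hypothetical corpus and test items.
training_docs = [
    "forum post: a train leaves the station at noon traveling 60 miles per hour east",
    "unrelated text about cooking pasta with garlic and olive oil tonight",
]
leaked = "A train leaves the station at noon traveling 60 miles per hour"
clean = "How many apples remain after Sam gives away three of his twelve apples"

print(contamination_rate(leaked, training_docs, n=5))  # 1.0
print(contamination_rate(clean, training_docs, n=5))   # 0.0
```

Items with a high overlap rate are candidates for manual review or removal from the test set. The threshold at which you act is a judgment call that depends on how much boilerplate your domain shares.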

Pro Tip: Schedule regular metric audits to catch drift and bias early. As your model updates or your data distribution shifts, metrics that once made sense may become obsolete. Revisit your evaluation strategy quarterly, and don’t hesitate to retire metrics that no longer align with your goals.

Balancing quantitative scores with qualitative judgment is crucial. Numbers provide objectivity and scale, but human insight captures context, edge cases, and ethical considerations. When a model scores 92% on a benchmark but users report frustration, trust the users. Investigate the gap, and adjust your metrics to reflect what truly matters.

Integrate real-world applicability from the start. Don’t wait until deployment to discover your evaluation strategy was flawed. Test models on diverse, representative data, simulate production conditions, and measure performance on tasks that mirror actual use cases. This proactive approach reduces surprises and builds confidence in your system’s reliability.

For deeper learning, explore agent evaluation frameworks that combine multiple metrics, human feedback, and iterative refinement. These frameworks offer a holistic view of performance, helping you optimize not just for scores but for genuine impact. Prioritize real-world metrics that reflect user needs, business outcomes, and ethical standards, ensuring your AI systems deliver value beyond the lab.

FAQ

What are the best evaluation metrics for AI classification tasks?

Accuracy, precision, recall, and F1-score are the standard choices; which one to prioritize depends on class balance and error costs. For balanced datasets, accuracy offers a simple summary. When classes are imbalanced or certain errors are more costly, precision tells you how well you are avoiding false positives, recall tells you how well you are avoiding false negatives, and F1-score balances both.

How do benchmarks like MMLU and ImageNet influence AI evaluation?

Benchmarks offer standardized tests reflecting performance on complex tasks, enabling objective comparisons across models and research teams. However, saturation at high scores limits their ability to distinguish top models effectively. When scores cluster near 90%, small differences become noise, and benchmarks fail to capture real-world deployment challenges or nuanced capabilities.

What challenges arise from surface bias in AI code evaluation metrics?

Metrics like CodeBLEU often correlate with code style or structure, not functional accuracy. This can mislead evaluations if functional correctness is the goal. A model might produce syntactically elegant code that fails to solve the problem, scoring high on form-based metrics while delivering no real value.

How can AI engineers avoid pitfalls in metric contamination?

Regularly audit datasets and benchmarks for overlap with training data, using n-gram matching or embedding similarity checks to detect leakage. Use diversified test sets and keep evaluation iterative with fresh data, refreshing held-out sets periodically. Incorporate human-in-the-loop review to validate metric integrity, ensuring scores reflect genuine capability rather than memorization. Explore evaluation optimization frameworks that emphasize contamination detection and real-world applicability.
